Bolide

Autonomous car race

In 2024, ENS Paris Saclay organized a new edition of its robotics competition, in which teams of students from different schools (ENS, Centrale, ENSTA, Institut Villebon Charpak, IUT de Cachan, etc.) competed in an autonomous car race on a standard track of unknown shape. The vehicle is identical for all teams (it is the chassis of a remote-controlled car) and can be equipped with multiple sensors (LIDAR, cameras, etc.) to perform the required task: complete 3 laps of the track in the shortest possible time while avoiding the other cars. The vehicle must operate completely autonomously: once the race has started, no control communication with the vehicle is allowed.

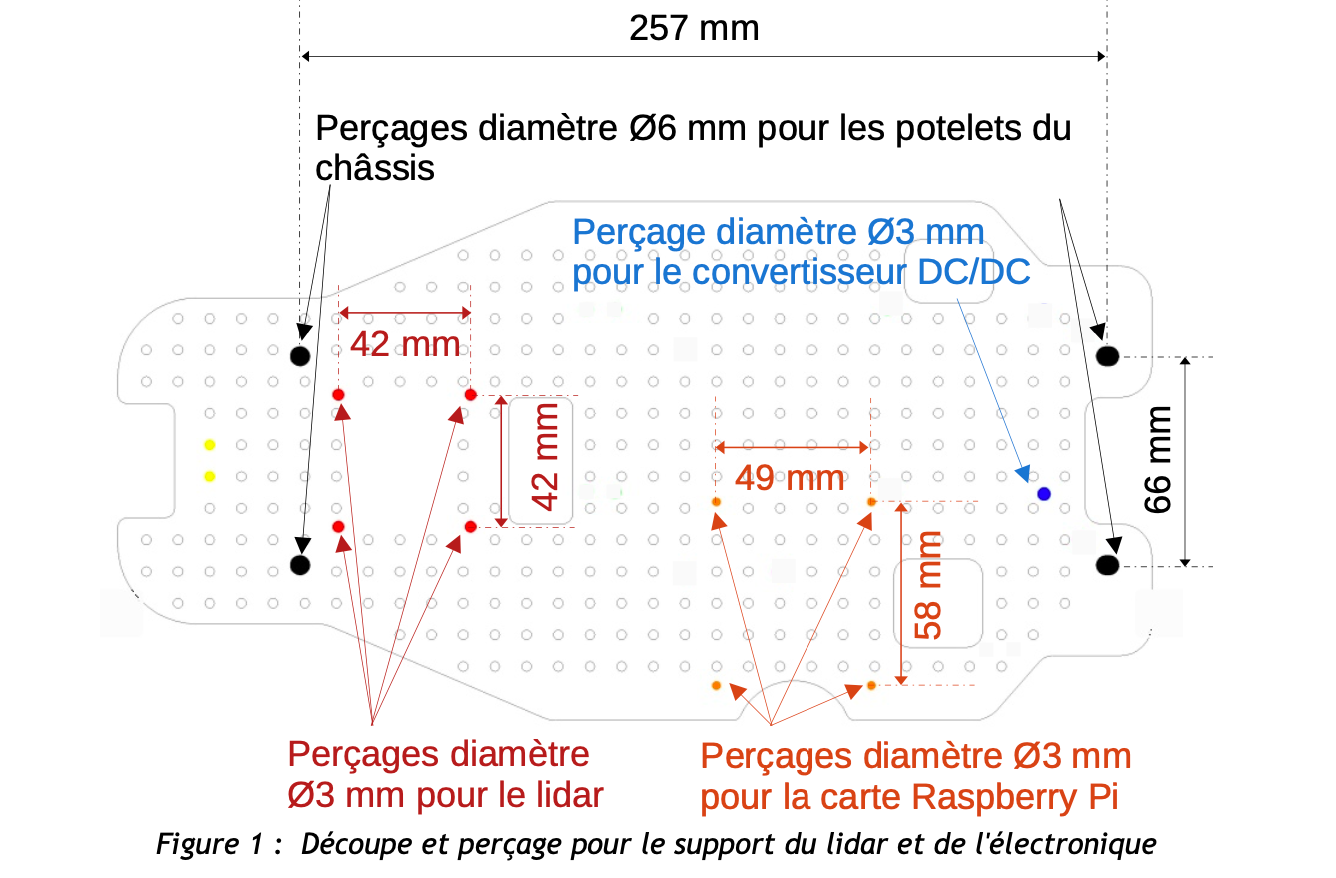

The selected architecture is based on ROS and enables real-time control of the vehicle’s on-board electronics (speed control, obstacle perception via “time of flight” sensors, LIDAR, etc.). Our priority has been to document the prototype’s capabilities and handling, and to work on new navigation algorithms based on the vehicle’s perceptive capabilities. In particular, we are working on the vision part of the system, which is still largely under-exploited, to perform all or part of tasks such as obstacle detection and navigation.
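To give an idea of what a navigation algorithm based on the vehicle's perception can look like, here is a minimal sketch of a purely reactive strategy: steer toward the most open direction seen by the LIDAR. It is an illustration only and not the method used on the car. The function name `steering_from_scan` and all of its parameters (`max_range`, `half_window`, `max_steer`) are hypothetical; the inputs simply mirror the `ranges`, `angle_min` and `angle_increment` fields of a ROS `sensor_msgs/LaserScan` message, so the function could be called from a scan callback.

```python
import math


def steering_from_scan(ranges, angle_min, angle_increment,
                       max_range=5.0, half_window=2,
                       max_steer=math.radians(30)):
    """Return a steering angle (rad) pointing at the most open direction.

    Hypothetical helper: parameter names and default values are
    assumptions chosen for illustration, not values from the project.
    """
    if not ranges:
        raise ValueError("empty scan")

    # Treat NaN or non-positive readings as blocked (0 m) and cap
    # far or infinite readings at max_range.
    cleaned = []
    for r in ranges:
        if math.isnan(r) or r <= 0.0:
            cleaned.append(0.0)
        else:
            cleaned.append(min(r, max_range))

    # Moving average so that a single long beam squeezing between two
    # obstacles does not win over a genuinely wide opening.
    smoothed = []
    for i in range(len(cleaned)):
        lo = max(0, i - half_window)
        hi = min(len(cleaned), i + half_window + 1)
        smoothed.append(sum(cleaned[lo:hi]) / (hi - lo))

    # Index of the beam with the largest smoothed distance
    # (the first one in case of ties).
    best = max(range(len(smoothed)), key=smoothed.__getitem__)

    # Convert the beam index to an angle and clamp it to the
    # steering limits.
    target = angle_min + best * angle_increment
    return max(-max_steer, min(max_steer, target))
```

With a 181-beam scan covering -90° to +90° in 1° steps, where every beam reads 1 m except an opening of 4 m between +5° and +15°, the function returns a steering angle of about +7°, inside the opening. A real controller would also have to set the speed and account for the width of the car, which this sketch ignores.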

We have studied different approaches to obstacle detection and navigation, including a deep learning approach (segmentation, to determine the type of obstacle and the procedure to follow) as well as SLAM and control. Here is the link to our project.